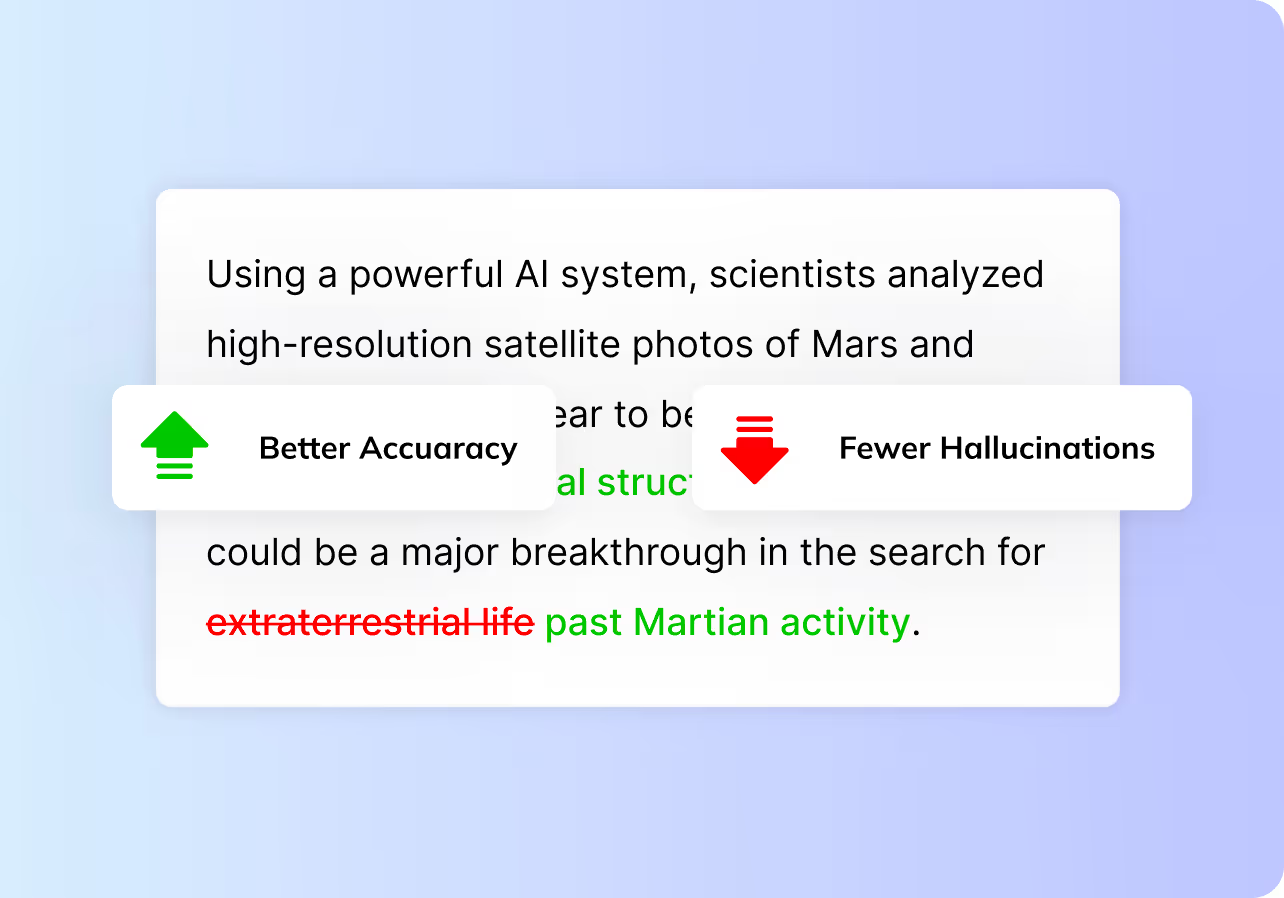

More accurate answers, fewer hallucinations

Combine graph retrieval with vector search to improve reasoning for complex questions and keep answers grounded in your data.

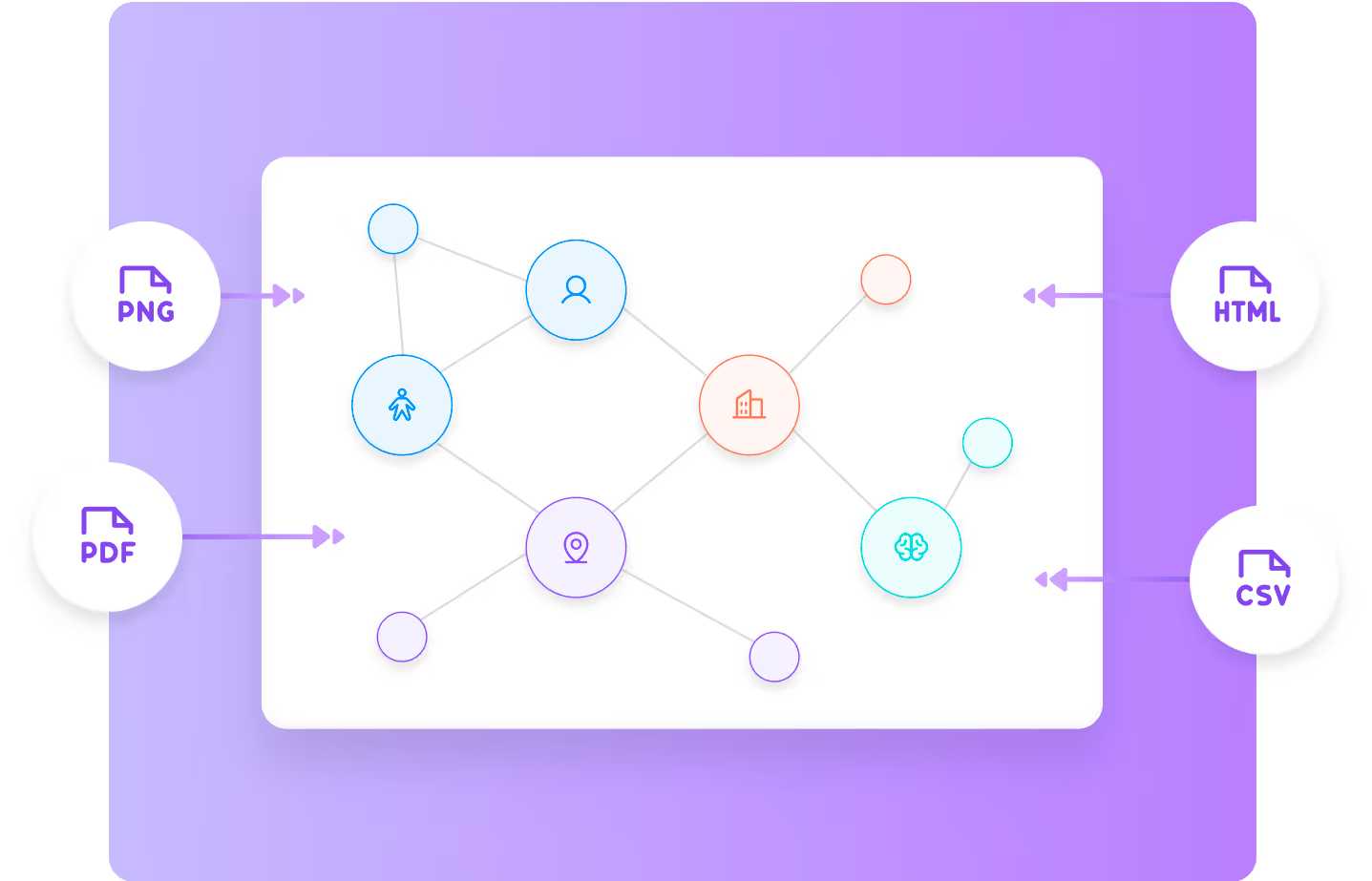

GraphRAG brings structure to retrieval for answers you can trust.

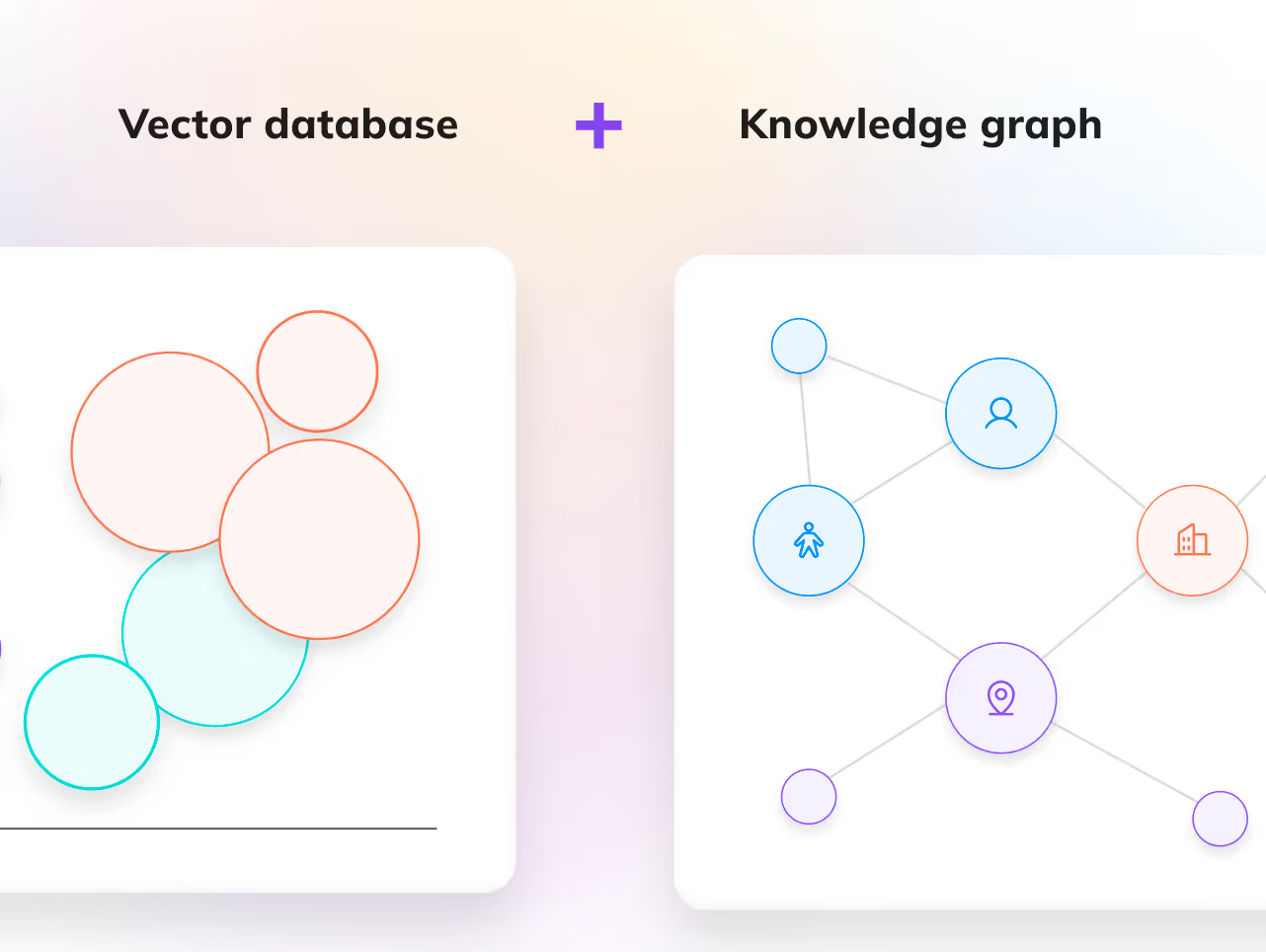

GraphRAG combines vector search with knowledge graphs to reason over entities and relationships within your data, improving accuracy, grounding, and transparency for complex questions.

Higher accuracy on complex queries

Uses entity relationships and graph structure to retrieve the most relevant context.

Fewer hallucinations, better grounding

Answers are anchored in your knowledge graph, not inferred from loosely connected content.

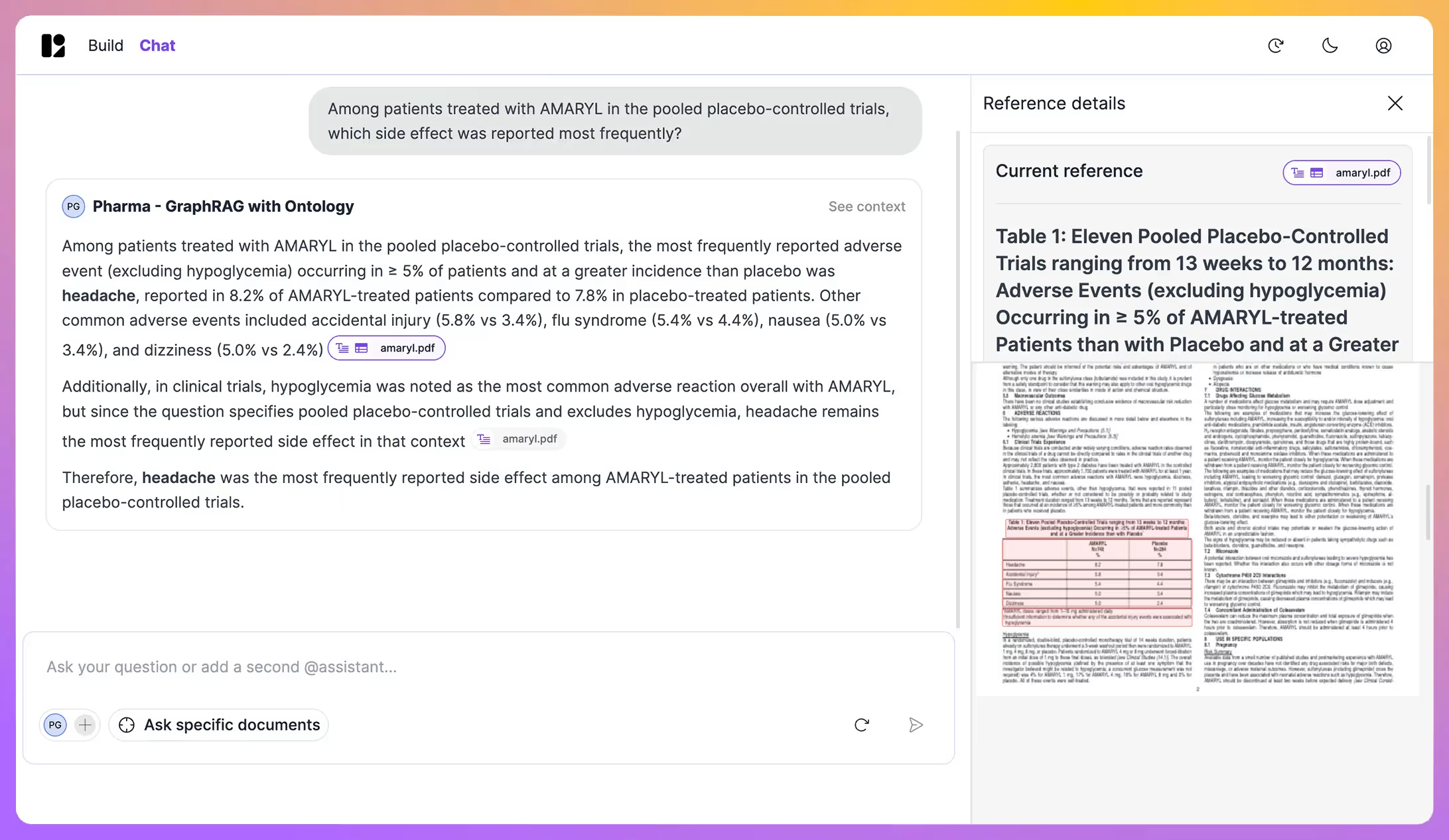

Explainable reasoning, not black-box retrieval

You can trace how information is connected and understand why an answer was produced.
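To make the idea of combining vector search with a knowledge graph concrete, here is a minimal sketch in plain Python. It is a toy illustration, not how any particular GraphRAG product is implemented: the documents, embeddings, entity names, and the helper functions `vector_search`, `expand`, and `retrieve` are all hypothetical. It finds the closest document by cosine similarity, follows graph edges from the entities that document mentions, and returns both the gathered documents and the edges it traversed, so the path to the context can be inspected.

```python
import math

# Hypothetical toy corpus: texts, embeddings, and entities are invented for illustration.
DOCS = {
    "d1": {"text": "Acme acquired Beta Corp in 2021.",
           "vec": [0.9, 0.1, 0.0], "entities": ["Acme", "Beta Corp"]},
    "d2": {"text": "Beta Corp makes industrial sensors.",
           "vec": [0.1, 0.9, 0.0], "entities": ["Beta Corp"]},
    "d3": {"text": "Gamma Ltd sells office furniture.",
           "vec": [0.0, 0.1, 0.9], "entities": ["Gamma Ltd"]},
}

# Hypothetical knowledge graph: entity -> list of (relation, target entity).
GRAPH = {
    "Acme": [("ACQUIRED", "Beta Corp")],
    "Beta Corp": [("MAKES", "Sensors")],
    "Gamma Ltd": [],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def vector_search(query_vec, k=1):
    """Return the ids of the k documents most similar to the query vector."""
    ranked = sorted(DOCS, key=lambda d: cosine(query_vec, DOCS[d]["vec"]), reverse=True)
    return ranked[:k]


def expand(seed_entities, hops=1):
    """Follow graph edges from the seed entities; return reached entities and traversed edges."""
    seen = set(seed_entities)
    frontier = list(seed_entities)
    trace = []
    for _ in range(hops):
        next_frontier = []
        for entity in frontier:
            for relation, target in GRAPH.get(entity, []):
                trace.append((entity, relation, target))
                if target not in seen:
                    seen.add(target)
                    next_frontier.append(target)
        frontier = next_frontier
    return seen, trace


def retrieve(query_vec, k=1, hops=1):
    """Vector search for seed documents, then add documents that mention graph-connected entities."""
    seed_ids = vector_search(query_vec, k)
    seeds = [e for d in seed_ids for e in DOCS[d]["entities"]]
    entities, trace = expand(seeds, hops)
    doc_ids = [d for d in DOCS
               if d in seed_ids or entities & set(DOCS[d]["entities"])]
    return {"docs": doc_ids, "trace": trace}


result = retrieve([1.0, 0.0, 0.0])
print(result["docs"])
print(result["trace"])
```

In this toy setup, the vector step alone would return only the closest document; the graph step adds a second document because it mentions an entity linked to the first, and the returned `trace` lists the edges that justified including it.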

Trusted by leading teams managing large-scale document knowledge.

Deep expertise in GraphRAG and structured retrieval

Patrick Duvaut

Head of Innovation

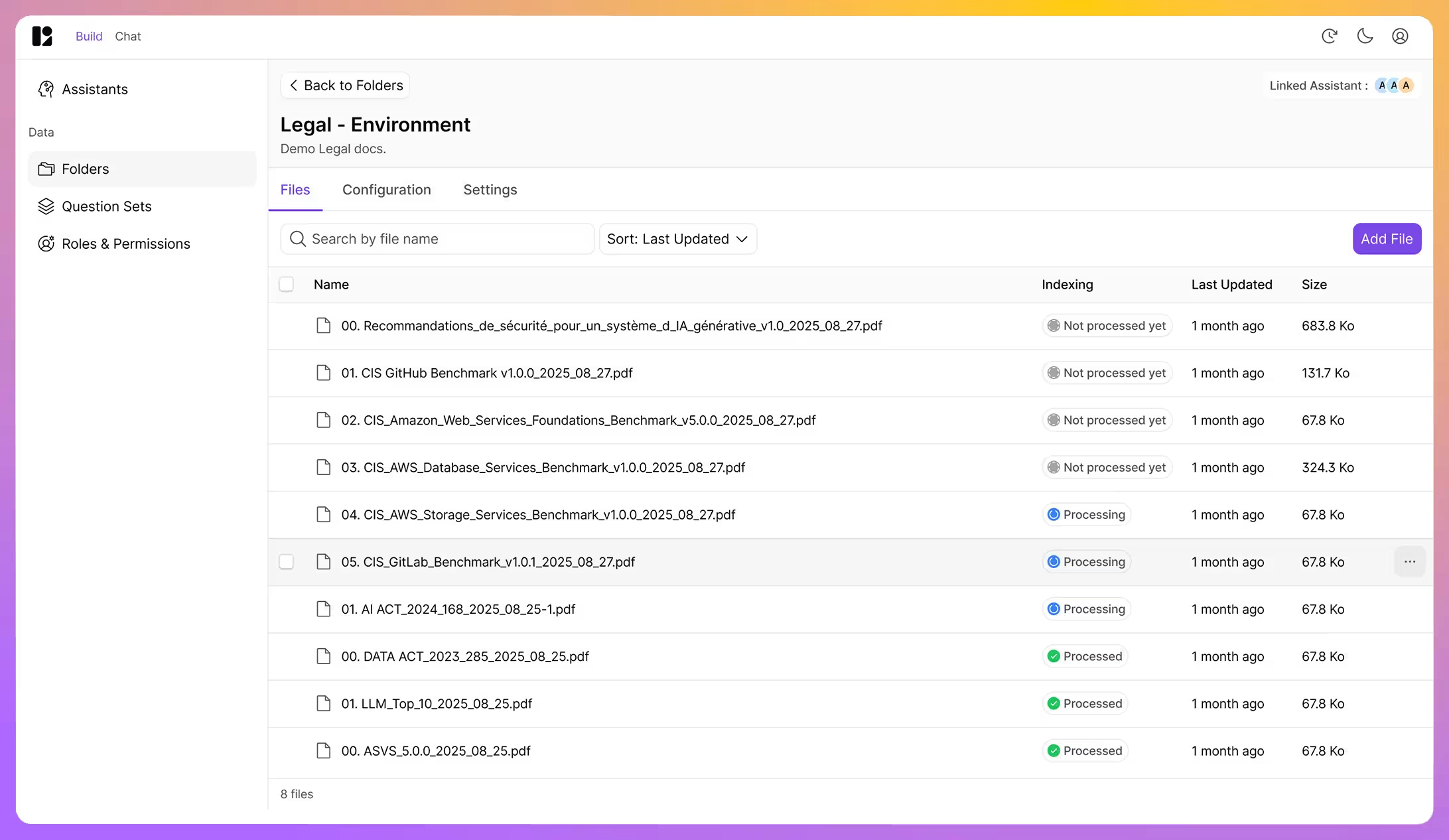

See how GraphRAG improves accuracy and reduces hallucinations

Discover how GraphRAG combines structured knowledge and retrieval to deliver accurate, traceable answers at scale.
