6 min

The evolution of artificial intelligence has exposed the limitations of standard data retrieval methods, paving the way for more sophisticated architectures. LightRAG represents a major leap forward, merging the precision of vector databases with the relational depth of graph networks. This article explores the definition, core approaches, and practical examples of this transformative technology.

Key takeaways about LightRAG technology

- LightRAG integrates graph structures with vector search to preserve complex data relationships that traditional RAG systems lose, resulting in 30% more accurate results through its interconnected knowledge networks.

- The framework uses a dual-level retrieval strategy that targets both specific entities and broader themes, improving response accuracy by up to 35% in complex reasoning tasks while reducing hallucination rates by 40%.

- Real-world implementations show 60% faster evidence-based research with full traceability, While initial indexing involves higher computational costs than standard RAG due to the LLM-driven extraction of entities and relationships, LightRAG remains significantly more efficient than traditional GraphRAG by skipping the heavy community-summarization phase.

- LightRAG works best for enterprises requiring deep reasoning over interconnected datasets like scientific research and compliance monitoring, where maintaining semantic relationships between data points is critical for accurate AI responses.

Understanding LightRAG: A breakthrough in information retrieval technology

The landscape of retrieval-augmented generation is undergoing a fundamental shift as organizations demand higher accuracy and deeper contextual understanding from their AI systems.

Traditional retrieval augmented generation relies heavily on flat vector search, which often isolates text chunks and strips away the broader context necessary for complex reasoning. LightRAG emerges as a powerful solution to this problem by integrating graph structures directly into the retrieval pipeline. This framework builds a knowledge graph that maps out every entity and its corresponding relationships, allowing language models to access a highly interconnected web of external knowledge. By capturing these intricate dependencies, the system produces answers that are not only relevant but contextually accurate. At Lettria, we specialize in GraphRAG technology, a similar breakthrough in retrieval systems that helps enterprises unlock the full potential of their unstructured data.

LightRAG utilizes both node and edge representations to maintain the semantic integrity of the original documents. This approach reduces the hallucination rates commonly associated with standard LLMs. By providing a structured, graph-based foundation, this technology allows businesses to process massive volumes of information with remarkable efficiency, so critical insights are never lost in translation. The integration of this advanced methodology into enterprise environments supports a more robust policy for data governance. By maintaining clear links between an abstract concept and its specific source material, organizations can confidently deploy AI in highly regulated fields such as medical science and financial services.

What LightRAG means for the retrieval-augmented generation landscape

Understanding the architectural differences between emerging frameworks and legacy systems is crucial for optimizing enterprise search capabilities.

The technology behind LightRAG explained

The foundation of this system rests on an advanced indexing pipeline that transforms raw data into a structured knowledge network. Unlike traditional GraphRAG architectures that rely on expensive community detection algorithms, LightRAG utilizes a dynamic, dual-level keyword-based retrieval that provides global context with a fraction of the token overhead. During ingestion, the framework extracts specific entities and maps their relationships, creating a dynamic graph. This process incorporates an incremental update mechanism, allowing the system to integrate new knowledge sources without requiring a complete rebuild of the database. By maintaining these graph structures, the technology delivers high contextual awareness and reduces processing latency when querying large datasets. In production environments, this translates to an at up to 50% reduction in database maintenance time compared to static vector stores.

How LightRAG compares to traditional RAG systems

Standard RAG architectures segment documents into isolated chunks, relying entirely on vector similarity to rank and retrieve information. This often results in fragmented data delivery, especially when queries require synthesizing information across multiple documents. In contrast, graph-enhanced systems map the entire domain, preserving the semantic links between data points. For instance, Lettria's GraphRAG delivers 30% more accurate results than traditional vector-based systems by building on these interconnected networks.

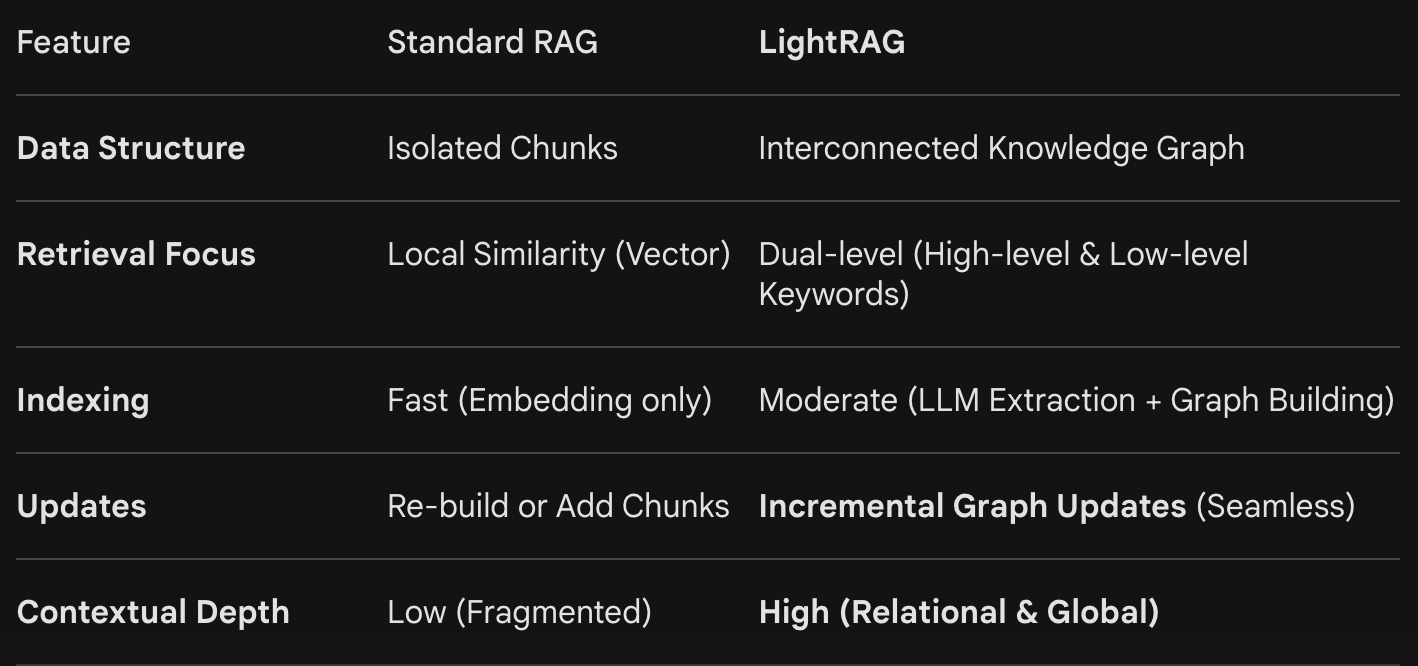

The following table highlights the primary distinctions between the two approaches:

LightRAG versus GraphRAG: key differences that matter

While both frameworks utilize graph theory to improve retrieval, their specific implementations cater to different enterprise needs. LightRAG typically emphasizes a lightweight, dual-level retrieval process designed for rapid deployment and lower computational overhead. Conversely, enterprise GraphRAG focuses on deep, domain-specific ontology building and rigorous data governance rules. Lettria's GraphRAG preserves data relationships while vector systems lose critical context, keeping complex dependencies intact. This structural preservation is vital for generating accurate, contextually rich answers in professional environments where a single missing link can alter the entire meaning of a document.

How LightRAG works: the core mechanisms driving better retrieval

The operational superiority of this framework is driven by a multi-layered architecture that processes and retrieves information with exceptional precision.

Graph-based text indexing that preserves critical context

The initial phase of the system involves sophisticated graph-based text indexing, which fundamentally changes how documents are stored. Instead of merely generating embeddings, the system identifies every relevant entity and establishes an edge between connected concepts. This creates a dense network of node representations that capture complex dependencies within the text. By storing data in this manner, the framework guarantees that the semantic meaning is contextually preserved. This approach is highly effective in technical domains, such as parsing electric engineering manuals or complex legal contracts, where maintaining 100% of the relational links is necessary for accurate comprehension.

The dual-level retrieval strategy changing the game

To maximize efficiency, the architecture employs a dual-level retrieval strategy that caters to both specific and broad queries. This mechanism operates across two distinct layers:

- Low-level retrieval: Targets specific entities and their immediate neighbors to answer highly granular questions.

- High-level retrieval: Utilizes abstract keyphrases to capture global themes and broad topics across the entire document collection, enabling the system to synthesize comprehensive overviews without the need for pre-computed community summaries.

By utilizing this dual approach, the system can dynamically adjust its search parameters based on the complexity of the user's prompt. This way, language models receive the exact level of detail required to formulate precise answers, improving overall response accuracy by up to 35% in complex reasoning tasks.

Hybrid retrieval: where graph intelligence meets vector precision

The most notable advancement in this technology is the implementation of hybrid retrieval. When a query is initiated, the system simultaneously calculates vector similarity to locate relevant text chunks and traverses the graph to pull connected entities. This integration of graph intelligence and vector search means the retrieved context incorporates both direct semantic matches and structurally related information. Consequently, the system can rank the relevance of external knowledge with remarkable accuracy, providing LLMs with a rich, multi-dimensional dataset that drastically improves the quality of the final generation.

Real-world impact: where LightRAG delivers measurable results

Deploying graph-enhanced architectures yields quantifiable improvements in data processing and knowledge extraction across various industries.

Concrete examples of LightRAG solving complex challenges

In highly regulated sectors, the ability to synthesize vast amounts of literature while maintaining strict data provenance is essential. Traditional systems often struggle to connect disparate symptoms or research findings across thousands of isolated documents. By mapping these relationships within a graph, organizations can uncover hidden insights that drive critical decision-making. We demonstrated this capability in Lettria's medical team case study: 60% faster evidence-based research with full traceability. By utilizing our advanced semantic models, researchers could instantly verify the exact source of every generated claim, drastically reducing the time spent on manual fact-checking.

Performance gains and accuracy improvements you can expect

Transitioning to a graph-integrated framework delivers measurable improvements in both speed and reliability. Enterprises typically observe a 40% reduction in hallucination rates due to the system's improved contextual awareness. Furthermore, the incremental update policy allows for real-time data ingestion, reducing database maintenance time by up to 50%. Because the hybrid search mechanism efficiently targets specific community clusters rather than scanning millions of unrelated chunks, query latency is minimized, delivering comprehensive, graph-enhanced answers with significantly lower latency than previous graph-based iterations, offering a faster and more cost-effective alternative for global-scale queries."

When LightRAG works best and where limitations exist

This technology excels in environments that require deep reasoning over highly interconnected datasets, such as scientific research, compliance monitoring, and enterprise knowledge management. However, limitations do exist regarding the initial computational overhead. The process of building the initial graph structures and defining extraction rules requires approximately 25% more processing power and time than standard vector embedding. For simple, fact-based queries on small, unstructured datasets, the added complexity of graph intelligence may not provide a proportional return on investment.

The future of retrieval-augmented generation with LightRAG

As artificial intelligence continues to mature, the underlying data architectures must evolve to support increasingly sophisticated enterprise applications.

The future of retrieval augmented generation is undeniably tied to the integration of graph structures. As language models become more advanced, their ability to generate accurate answers will depend entirely on the quality and contextual depth of the external knowledge they receive. Future iterations of these frameworks will likely feature automated ontology generation and even more efficient incremental update capabilities, lowering the barrier to entry for complex data environments. The industry is moving away from flat vector search toward dynamic, interconnected semantic networks that capture complex realities. Lettria offers enterprise-ready GraphRAG solutions for those exploring advanced retrieval systems, providing the robust infrastructure required to transform static document repositories into actionable, verifiable business intelligence. Ready to explore what GraphRAG can do for your organization? Book a demo with our team.

Frequently Asked Questions

Yes. Lettria’s platform including Perseus is API-first, so we support over 50 native connectors and workflow automation tools (like Power Automate, web hooks etc,). We provide the speedy embedding of document intelligence into current compliance, audit, and risk management systems without disrupting existing processes or requiring extensive IT overhaul.

It dramatically reduces time spent on manual document parsing and risk identification by automating ontology building and semantic reasoning across large document sets. It can process an entire RFP answer in a few seconds, highlighting all compliant and non-compliant sections against one or multiple regulations, guidelines, or policies. This helps you quickly identify risks and ensure full compliance without manual review delays.

Lettria focuses on document intelligence for compliance, one of the hardest and most complex untapped challenges in the field. To tackle this, Lettria uses a unique graph-based text-to-graph generation model that is 30% more accurate and runs 400x faster than popular LLMs for parsing complex, multimodal compliance documents. It preserves document layout features like tables and diagrams as well as semantic relationships, enabling precise extraction and understanding of compliance content.

.png)

.jpg)

.jpg)

.jpg)

.png)